Text-to-video AI is reshaping how creators, marketers, and storytellers make visual content. Instead of shooting, editing, and rendering the old-fashioned way, you can now describe a scene and let AI generate a compelling video — often with sound, motion, and style baked in. Below are six powerful text-to-video models worth exploring if you’re serious about AI video production today.

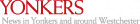

1. Pollo AI – All-In-One Video Creation Hub

Pollo AI is a full creative suite aimed at simplifying video creation from start to finish. This platform is built around the idea of turning textual prompts into engaging, ready-to-publish videos without manual editing. It’s particularly attractive for social media creators, small agencies, and solo marketers who want quick, polished output without a steep learning curve.

What sets Pollo AI apart is its integration of multiple leading video models under one roof. Rather than locking you into a single generation algorithm, it lets you pick from a range of engines — including Kling AI, Runway, and more — to match different styles and storytelling needs. This flexibility means you can experiment with cinematic scenes, animated shorts, character-driven pieces, or ambient clips with just one interface.

Alongside text-to-video AI, Pollo AI offers mobile and web apps, templates for short-form content, avatar generation, and editing tools for enhancing or restyling videos after generation. That breadth makes it feel more like an all-in-one creative workstation than a single-feature tool, which is ideal if you need fast turnaround and variety.

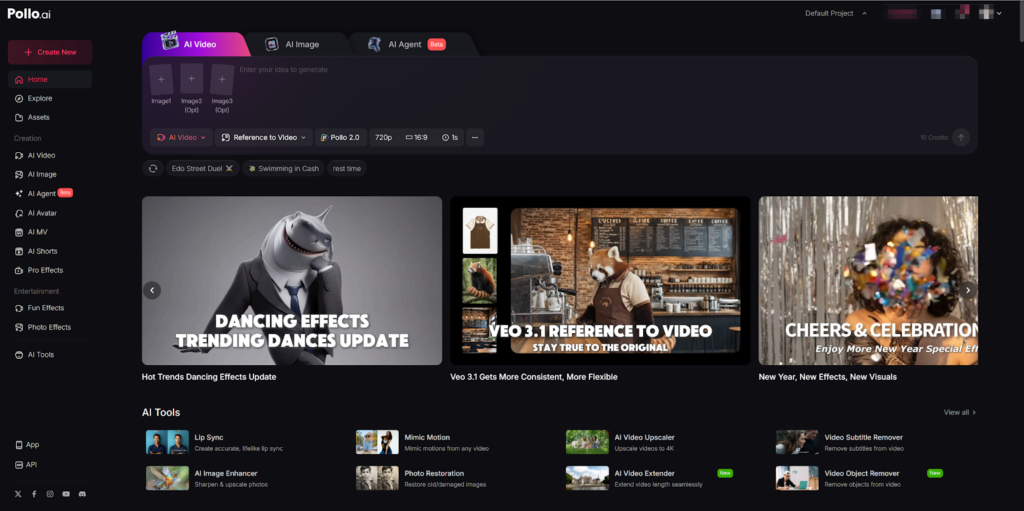

2. Runway AI – Creative Control and Visual Flexibility

Runway AI has been a consistent leader in AI video generation, offering highly customizable text-to-video models that focus on creative control. Its models — including Gen-2, Gen-3 Alpha, and even newer versions — let users construct scenes with adjustable camera angles, motion paths, and stylistic presets.

One reason Runway stands out is its camera and motion controls. You can specify directions like tilt, pan, or zoom directly in your text prompt, giving more expressive depth than most competitors. There are also style presets — think claymation, anime, or photorealistic aesthetics — so the same prompt can produce dramatically different visual results.
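To make the idea concrete, here is a minimal Python sketch that assembles a prompt from a scene description, a camera move, and a style. The `build_prompt` helper and the "camera:" / "style:" wording are assumptions made for illustration, not Runway's documented prompt syntax, so adapt the phrasing to whatever the tool's own prompting guide recommends.

```python
from typing import Optional


def build_prompt(scene: str, camera: Optional[str] = None, style: Optional[str] = None) -> str:
    """Join a scene description with optional camera and style hints.

    Hypothetical helper: the "camera:" and "style:" labels are illustrative,
    not an official prompt format.
    """
    parts = [scene.strip().rstrip(".")]
    if camera:
        parts.append(f"camera: {camera.strip()}")
    if style:
        parts.append(f"style: {style.strip()}")
    return ". ".join(parts) + "."


if __name__ == "__main__":
    scene = "A lighthouse on a rocky coast at dusk"
    # Same scene and camera move, three different style presets.
    for style in ("claymation", "anime", "photorealistic"):
        print(build_prompt(scene, camera="slow pan left", style=style))
```

Keeping the scene fixed and varying only the style hint is an easy way to compare how much a preset changes the result.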

While some users find the prompt requirements a little finicky, this is often a trade-off for greater detail and control. Runway’s video generators typically produce short clips (around 4–5 seconds by default), though most workflows let you extend these segments or chain them together. It offers free-tier credits as well as paid plans for heavier usage.
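When planning a longer piece from short clips, it helps to estimate how many segments you will need. The sketch below is simple arithmetic, assuming every clip has the same length and ignoring any overlap or transitions between clips; the `clips_needed` name and the 5-second default are illustrative choices based on the clip lengths mentioned above.

```python
import math


def clips_needed(target_seconds: float, clip_seconds: float = 5.0) -> int:
    """Return how many fixed-length clips cover the target duration."""
    if target_seconds <= 0 or clip_seconds <= 0:
        raise ValueError("durations must be positive")
    # Round up: a partially filled final clip still has to be generated.
    return math.ceil(target_seconds / clip_seconds)


if __name__ == "__main__":
    print(clips_needed(30))     # 30-second video from 5-second clips
    print(clips_needed(30, 4))  # same video from 4-second clips
```

Because each generation usually consumes credits, a rough count like this is also a quick way to budget a project before you start.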

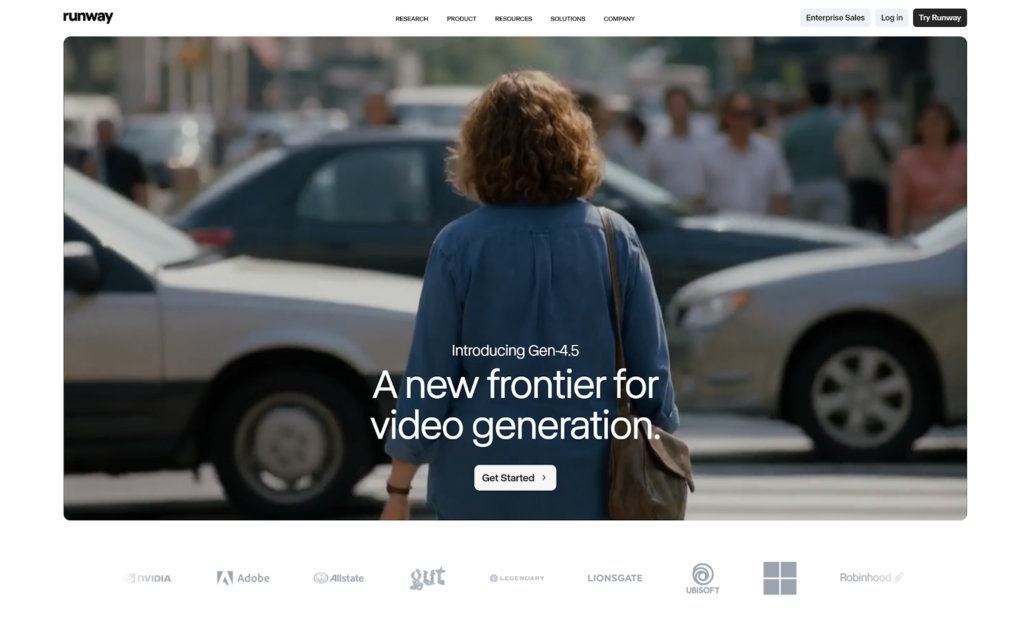

3. Sora – Social-Native, Accessible Video Generation

OpenAI’s Sora series has quickly become one of the more talked-about text-to-video tools thanks to its integration with social sharing features and accessible apps. It can generate short clips, in some cases up to about a minute long, from detailed text prompts or existing images.

Sora’s edge lies in ease of use and mainstream accessibility. Because it’s available through mobile apps in many regions and bundled with popular AI subscription plans, creators can experiment with video generation without specialized tools or APIs. The interface itself is optimized for fast, casual content creation — think quick scenes, creative experiments, or visually engaging social clips.

Despite its popularity, Sora has sparked discussions about training data and content rights, which is something to be aware of if you’re creating commercially oriented work at scale.

4. Google Veo – High-Fidelity and Audio-Integrated Output

Google’s Veo series is one of the most technically capable text-to-video models available. Developed by Google DeepMind, the latest releases (such as Veo 3.1) can produce longer video clips with synchronized audio, including sound effects and environmental sounds, rather than silent visuals alone.

This tight pairing of audio with visuals sets Veo apart from many competitors that focus solely on animation or visual generation. It’s slightly more niche, since it is usually accessed through Google’s AI platforms or specific developer channels, but it’s worth testing if you want high-quality clips or narrative output that feels film-like.

If you’re pushing toward professional-level visuals for ads, short films, or concept reels, Veo’s output can serve as a powerful foundation, though exploring it usually requires familiarizing yourself with Google’s broader AI ecosystem.

5. LTX-2 – Open-Source and Flexible Video Generation

LTX-2 comes from Lightricks and represents a different kind of text-to-video model: one focused on open access and flexibility. Unlike many tools tied to cloud platforms or proprietary UIs, LTX-2 is designed to be open-source and developer-friendly, enabling integrations into workflows where customization and control matter.

LTX-2 and its ecosystem variants let developers build more programmatic or automated video generation pipelines, making them useful for startups, creative tech teams, or studios that want to embed text-to-video generation directly into apps. Because it’s open, you can experiment with the model itself or fine-tune it for specialized outputs — something most hosted services don’t allow.

This combination of freedom and extensibility doesn’t always mean the slickest user experience out of the box, but for power users or developers, LTX-2 is a powerful building block rather than a finished product.

6. Dream Machine – Stylized and Motion-Focused Video Output

Dream Machine is another noteworthy text-to-video model that has gained attention for combining motion realism with stylistic nuance. Developed by Luma Labs, it excels at capturing believable movement, though there is less transparency about how the model was trained.

The key appeal here is how it represents motion. Dream Machine’s models have been noted for generating sequences that feel dynamic, even when based on simple prompts. This makes it ideal for visuals where action or flow is more important than strict realism — think animated shorts, motion graphic clips, or stylized sequences.

There’s also a growing ecosystem around Dream Machine that includes subscription plans and expanding features for editing or extending videos after generation. For creators who are experimenting with non-photorealistic outputs or more artistic visuals, it’s a compelling alternative model to test alongside the others.

Conclusion

The text-to-video landscape in 2026 is rich with options, each with its own strengths. Pollo AI shines as a versatile all-around creator platform that doesn’t force you to learn many tools. Runway AI offers fine-grained control and stylistic depth. Sora is accessible and social, Google Veo pushes fidelity and integrated audio, LTX-2 empowers developers, and Dream Machine adds artistic motion flair.

For anyone serious about integrating AI video into workflows — whether for marketing, storytelling, or creative experimentation — trying multiple models is the best way to find the right fit for your goals.